As part of the “Mental Health: Sensing & Intervention” (MHSI) 2023 Ubicomp workshop, I was asked to give a “technical” keynote on the state of sensing in mental health. I gave a talk on “Technical Perspectives on Mobile Sensing in Mental Health” and the slides are available below, with a discussion of the key take-away messages.

The talk was divided into three parts:

- BACKGROUND
  - Digital Phenotyping
  - Copenhagen Research Platform (CARP)
- CHALLENGES
  - (Technical) Challenges in Mobile Sensing (in Mental Health)
  - … and what to do about them
- LOOKING AHEAD
  - What is coming
  - How do I see the future of mobile sensing in Mental Health?

The core of the talk was a presentation of the different (technical) challenges that now exist in mobile sensing, and how we have been trying to address these challenges in the CARP Mobile Sensing framework.

As shown above, in the talk I argue that the main (Technical) Challenges in Mobile Sensing are the following:

- The fallacy that smartphones are ubiquitously available.

- It is not straightforward to access data and sensors.

- Background sensing is becoming increasingly difficult.

- It is hard to ensure adherence (from the study participants) to engage in sensing studies.

The Fallacy that Smartphones are Ubiquitously Available

The majority of papers written on mobile sensing and digital phenotyping (including my own) state in their opening remarks that the smartphone is a “ubiquitous” computing platform that is widely available worldwide – often citing statistics on the availability of smartphones in both developed and developing countries. However, even though this might be true from an overall perspective, from a technical point of view the “smartphone platform” is NOT one unified platform. Smartphones come in many different hardware versions, operating system versions, configurations, manufacturers, etc. There are huge differences between iOS and Android, for example, and each OS version (especially on Android) is configured very differently depending on hardware, API level, manufacturer, etc.

Android allows for collecting a wider range of data than iOS, and the majority of mobile sensing frameworks and applications are hence designed for Android. However, this is a huge problem if you target a study population that mainly uses iOS (like we do in Copenhagen). If you only support Android – and offer to lend Android phones to the study participants – the whole point of “using your own device” is lost.

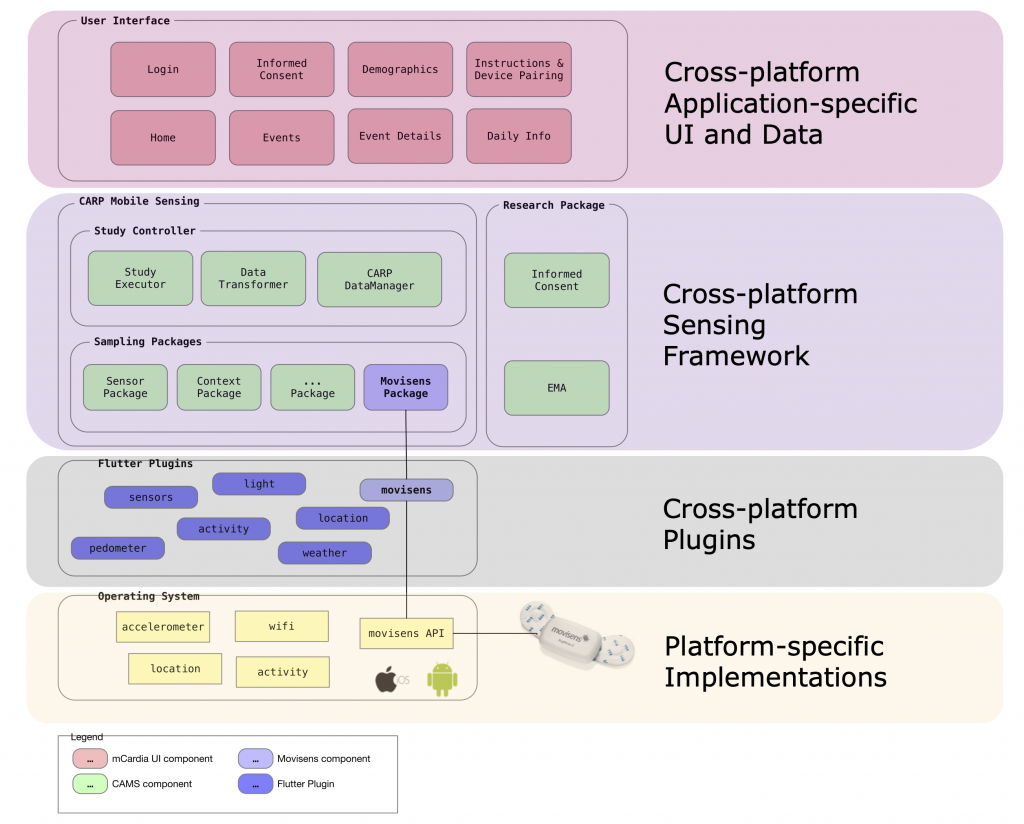

Hence, from a technical point of view, it is important to accommodate this heterogeneity across multiple hardware configurations and OS levels. For this reason, the CARP Mobile Sensing (CAMS) framework is designed as a cross-platform programming framework (leveraging Dart and Flutter), which – to a larger degree – allows for creating mHealth and mobile sensing applications that run on a heterogeneous set of devices.

As shown in Figure 1, the architecture of CAMS allows for creating cross-platform, application-specific user interfaces, application logic, and sensing logic. The plugin architecture of CAMS allows for OS-specific implementation at the sensor level, ensuring access to any native sensors and data.

Accessing Sensors and OS-level Information

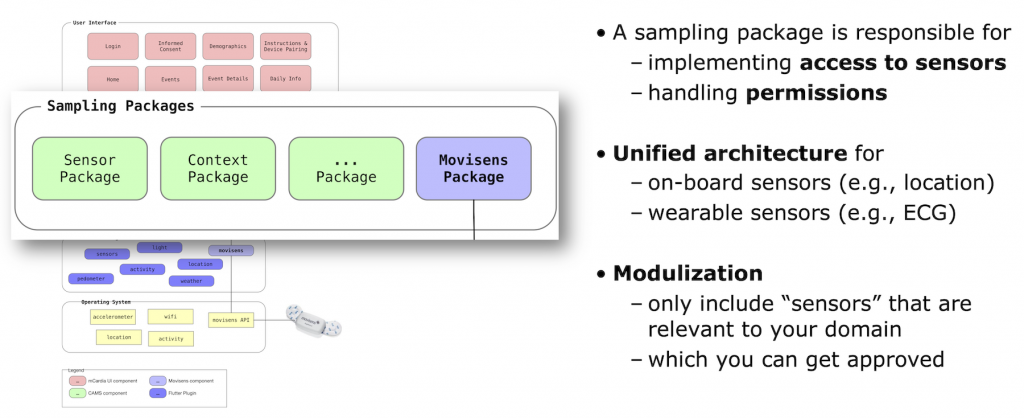

The second challenge to mobile sensing is accessing sensors and platform-specific information in the operating system (OS). With the increasing focus on privacy on smartphones, the operating systems have become increasingly restrictive about which sensors and data an app can access. For example, access to location data has been significantly restricted and complicated from Android 11 onward. Moreover, several types of OS-level data are not always accessible – for example, it is not possible to get app usage statistics / log data on iOS, and iOS no longer allows apps to collect Wi-Fi connectivity data.

However, the biggest restriction on mobile sensing apps comes from the restrictive approval policies of the Apple App Store and the Google Play Store. In order to get a mobile sensing app “out there” and make it “ubiquitously” available, the app has to be approved and published by the Apple and Google app stores, which thereby act as gatekeepers. And the policies of these app stores have become increasingly restrictive. For example, the AWARE client is no longer available in the Google app store.

In general, neither Apple nor Google approves apps that collect location information unless the location is used actively in the app. Hence, apps that collect location passively in the background – which is common to most mobile sensing apps – are not approved. Moreover, Apple does not approve apps in the “Health” category for children. For us, this made it impossible to release an app for monitoring and treatment of children with OCD.

The “Sampling Package” architecture of CAMS tries to accommodate this challenge to some degree, because it allows the app designer and programmer to include only the sampling packages that are needed in the specific mHealth app. Hence, if location information is not used in an app, the “Context” sampling package containing the location probe is not included. This will hopefully enable a smoother approval of the app in the app stores.
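The idea of only bundling the packages an app needs can be sketched in a few lines. The following is a minimal illustration in Python, not CAMS code (CAMS is written in Dart); the class names, measure names, and the `background_location` permission label are all hypothetical. It shows how the set of permissions an app must request follows directly from the packages that were registered.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SamplingPackage:
    """Hypothetical stand-in for a sampling package: a named bundle of measures."""
    name: str
    measures: frozenset
    permissions: frozenset = frozenset()


class PackageRegistry:
    """Hypothetical registry holding only the packages an app chose to include."""

    def __init__(self):
        self._packages = {}

    def register(self, package):
        self._packages[package.name] = package

    def supported_measures(self):
        # Union of the measures of all registered packages.
        return set().union(*(p.measures for p in self._packages.values()))

    def required_permissions(self):
        # Permissions are only needed for packages that were actually registered.
        return set().union(*(p.permissions for p in self._packages.values()))


# Illustrative packages; the contents are assumptions made for this example.
sensors = SamplingPackage("sensors", frozenset({"accelerometer", "light"}))
context = SamplingPackage(
    "context",
    frozenset({"location", "activity"}),
    frozenset({"background_location"}),
)

registry = PackageRegistry()
registry.register(sensors)  # the "context" package is deliberately left out

print(sorted(registry.supported_measures()))  # ['accelerometer', 'light']
print(registry.required_permissions())        # set()
```

Because the location-related package is never registered in this example, the app neither offers the location measure nor asks for the corresponding permission.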

Background Sensing

A common statement in many papers on mobile sensing and digital phenotyping is that data can be collected “unobtrusively” and “in the background”. This statement, however, is becoming increasingly false. A third core challenge to the whole concept of mobile sensing is that background sensing – and background processing in general – is increasingly being restricted both for privacy and battery-saving reasons.

For example, the “Don’t kill my app” website now documents the tendency of most (Android) smartphone manufacturers to kill apps in the background, and the trend is clear: for each release of Android, apps are being killed more and more aggressively. Similarly, iOS will kill off backgrounded apps based on various factors (which are hard to predict). In general, an app on iOS will only collect data when it is in the foreground (as is also evident in the RADAR-base pRMT app).

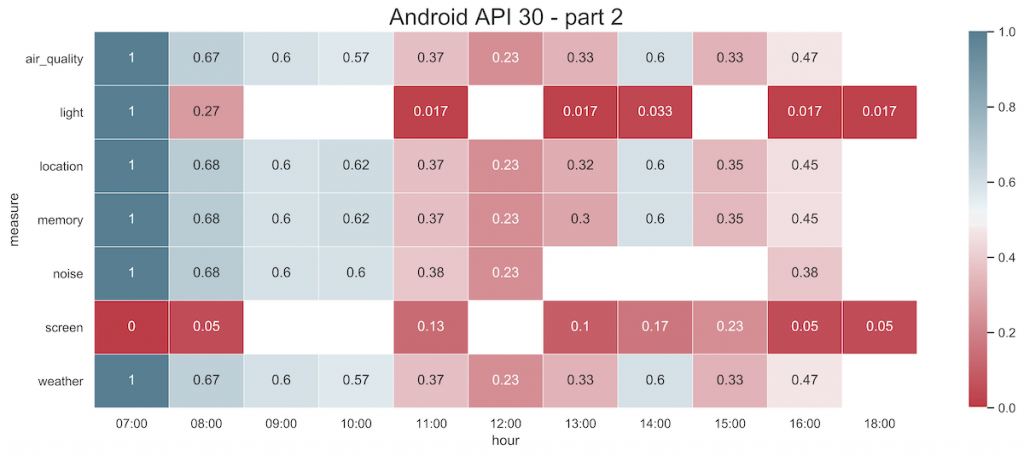

In CAMS, we have been tracking how background sensing behaves and have tried to accommodate it in various ways. We also regularly run so-called “Coverage” tests, which try to establish the degree to which CAMS is collecting the data that is “expected”. For example, Figure 3 shows the coverage of background sensing on Android 11 over a 24-hour window.
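As a rough illustration of what such a coverage measure could look like, the Python sketch below computes, for each hour of a 24-hour window, the fraction of expected samples that were actually collected. This is not the CAMS coverage test itself; the function name, its parameters, and the choice of hourly buckets are assumptions made for the example.

```python
from collections import Counter
from datetime import datetime, timedelta


def hourly_coverage(timestamps, window_start, expected_per_hour, hours=24):
    """Return, per hour, the fraction of expected samples actually collected."""
    counts = Counter()
    for ts in timestamps:
        # Whole hours elapsed since the start of the window.
        hour = (ts - window_start) // timedelta(hours=1)
        if 0 <= hour < hours:
            counts[hour] += 1
    # Cap at 1.0 so that oversampling does not hide gaps elsewhere.
    return [min(counts[h] / expected_per_hour, 1.0) for h in range(hours)]


# Illustrative data: one sample per minute for 12 hours, then the app is killed.
start = datetime(2023, 1, 1)
samples = [start + timedelta(minutes=m) for m in range(12 * 60)]

coverage = hourly_coverage(samples, start, expected_per_hour=60)
print(coverage[0], coverage[12])      # 1.0 0.0
print(sum(coverage) / len(coverage))  # 0.5
```

Reporting coverage per hour rather than as a single number makes it visible when in the day sensing stopped, which is the kind of pattern a 24-hour coverage plot is meant to reveal.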

The OS’s aggressive killing of apps is hard to combat. However, CAMS is designed to address this in a couple of ways. First, CAMS is not a stand-alone mobile sensing app, but a programming framework that can add mobile sensing to an application-specific app. Hence, by embedding sensing into an app that will be used on a daily basis by the user – and hence brought to the foreground on a regular basis – data collection will happen more continuously. Second, in CAMS the “Study Protocol” specifies what data (“measures”) to collect and the sampling strategy (e.g., frequency). In this way, we at least know what is “expected” and know the sampling coverage, which again allows us to analyze it, as shown in Figure 3. Third, CAMS collects “heartbeat” data from each smartphone and its connected devices (such as the ECG device shown in Figure 1). These heartbeat data allow a study manager to monitor if data is being collected as expected.
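To make the heartbeat idea concrete, here is a minimal Python sketch of how a study manager's dashboard might flag devices that have gone quiet. It is an illustration only and does not reflect how CAMS stores or processes heartbeats; the function name, the device IDs, and the 30-minute threshold are hypothetical.

```python
from datetime import datetime, timedelta


def silent_devices(last_heartbeat, now, max_silence=timedelta(minutes=30)):
    """Return sorted IDs of devices whose latest heartbeat is older than max_silence."""
    return sorted(
        device_id
        for device_id, seen_at in last_heartbeat.items()
        if now - seen_at > max_silence
    )


# Illustrative data: the phone reported recently, the ECG device did not.
now = datetime(2023, 1, 1, 12, 0)
last_heartbeat = {
    "phone-1": now - timedelta(minutes=5),
    "ecg-1": now - timedelta(hours=2),
}

print(silent_devices(last_heartbeat, now))  # ['ecg-1']
```

A check like this does not prevent the OS from killing the app, but it shortens the time before someone notices that data has stopped arriving.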

As an illustration of the challenges to background sensing, I used the m-Path Sense application as an example in the talk. In a recent study with N=104 participants over a 22-day study period, the authors show a sampling rate of ~50% for most measures (see Figure 4 in the paper), while also experiencing a large difference between iOS and Android. They conclude that even though the total amount of data collected would be adequate for most studies, it was less than the intended sampling frequency, likely caused by the OS’s attempts to save energy and resources when the device is not in use (e.g., Android’s Doze mode).

Adherence to Sensing

Adherence to mobile sensing studies has been a recurrent topic in digital phenotyping, and it has been shown to be challenging to engage study participants in the ongoing collection of data. For example, an impressive study from the RADAR-CNS consortium examined participant engagement in a longitudinal study running for more than a year. Among other things, the study shows a “survival curve” where 50% of the participants have “survived” (i.e., are active) after 300 days (Fig. 1 in the paper). Data from one participant (Fig. 2a) illustrate that passive collection of data from the smartphone stops after ca. 200 days, after which data collection is mainly driven by issuing questionnaires to the user.

In the talk, I argue that these findings encourage the strategy of embedding mobile sensing into a domain-specific mHealth application that will be used actively by the user, which keeps the app and the sensing running in the foreground on a regular basis. This is also the approach used in the m-Path app presented above, which is implemented using CAMS.

Looking Ahead

During the rest of the talk, I discussed the benefits and usefulness of mobile sensing for monitoring, diagnosis, treatment, and the collection of real-world evidence as part of clinical studies. I also advocated for developing more dedicated hardware for the collection of data (in order to avoid the monopoly on technology and market access held by Apple and Google) and for incorporating electrochemical sensors.

I also argued that we – as a community – should start looking beyond sensing and into actuation or interventions. Read more on this in my “From Sensing to Acting—Can Pervasive Computing Change the World?” article in the IEEE Pervasive Computing Magazine.